How AI Understands Styles and Textures

Artificial intelligence has become remarkably skilled at generating images that appear stylistically coherent and texturally rich. From painterly brushstrokes to photorealistic surfaces, modern AI systems can recreate visual qualities that humans intuitively recognize as “style” or “texture.” Yet this ability does not come from taste, emotion, or subjective appreciation. It emerges from mathematical representations, data patterns, and statistical learning. Understanding how AI interprets styles and textures helps demystify AI-generated art and clarifies both its strengths and its limitations.

What “style” and “texture” mean in visual terms

Before examining how AI processes visual information, it is useful to define the terms in a technical sense.

Style generally refers to higher-level visual patterns that remain consistent across multiple images. These include color palettes, contrast levels, brushstroke behavior, compositional tendencies, and recurring shapes. Style is not tied to a single object but to the way objects are represented.

Texture refers to lower-level surface properties. It includes fine-grained visual details such as grain, roughness, smoothness, fabric weave, wood patterns, or the subtle irregularities of paint on canvas. Texture is often local and repetitive, yet it plays a major role in how realistic or expressive an image appears.

Human perception naturally separates these concepts, but for AI, both are ultimately patterns of pixels with different spatial relationships.

Images as numerical representations

AI systems do not “see” images as pictures. Instead, every image is converted into numerical data. Each pixel becomes a set of values representing color intensity, usually across red, green, and blue channels. When images are processed by neural networks, these pixel values are organized into multidimensional arrays.

From this raw data, AI models learn to identify relationships between pixels. Edges, gradients, color transitions, and repeating motifs all become measurable patterns. What humans interpret as texture or style emerges from how these numerical relationships repeat and interact across many images.
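To make this concrete, here is a minimal Python sketch, using only the standard library, of an image as numbers. The 2-by-4 image, its colors, and the helper names `to_gray` and `horizontal_gradient` are illustrative assumptions. The grayscale conversion uses the ITU-R BT.601 luma weights. An edge is simply a place where neighbouring values jump.

```python
# A tiny 2x4 RGB "image": each pixel is an (R, G, B) triple in 0-255.
# Left half is dark brown, right half is light beige, so there is one vertical edge.
image = [
    [(60, 40, 20), (60, 40, 20), (230, 210, 180), (230, 210, 180)],
    [(60, 40, 20), (60, 40, 20), (230, 210, 180), (230, 210, 180)],
]


def to_gray(pixel):
    """Collapse an RGB triple to one brightness value (BT.601 luma weights)."""
    r, g, b = pixel
    return 0.299 * r + 0.587 * g + 0.114 * b


def horizontal_gradient(img):
    """Brightness difference between each pixel and its right-hand neighbour."""
    gray = [[to_gray(p) for p in row] for row in img]
    return [[row[x + 1] - row[x] for x in range(len(row) - 1)] for row in gray]


grad = horizontal_gradient(image)
print([[round(v, 1) for v in row] for row in grad])
# [[0.0, 168.9, 0.0], [0.0, 168.9, 0.0]]
```

The gradient is zero inside each flat region and large only at the boundary, which is the kind of measurable relationship the paragraph above describes.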

The role of neural networks in visual understanding

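Most modern AI art systems rely on deep neural networks. Convolutional neural networks (CNNs) have long been the standard architecture for analyzing images, and many of today's image generators are diffusion models whose denoising networks are built from convolutional or transformer layers. These networks are designed to recognize patterns at different levels of abstraction.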

Early layers focus on simple features:

- Edges and outlines

- Color contrasts

- Basic shapes

Deeper layers combine these features into more complex structures:

- Repeating surface details

- Characteristic brushstroke patterns

- Global color harmony and composition

By stacking many layers, the network gradually builds an internal representation in which content (what is depicted) and style (how it is depicted) can be partially separated, even though both originate from the same pixel data.
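The early-layer behaviour described in this section can be sketched with a single convolution. In this hypothetical example the 3-by-3 kernel is hand-picked (a Sobel-style vertical-edge detector); a real network learns its kernel values during training and stacks many such layers, feeding each layer's output into the next. As in most deep-learning libraries, the operation computed is cross-correlation, which is what CNN layers call convolution.

```python
# Hand-picked 3x3 kernel that responds to dark-to-bright transitions from left
# to right (Sobel-style). A real network learns its kernel values in training.
EDGE_KERNEL = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]


def conv2d(image, kernel):
    """'Valid' 2D cross-correlation: slide the kernel without padding."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for y in range(out_h):
        row = []
        for x in range(out_w):
            total = 0
            for i in range(kh):
                for j in range(kw):
                    total += image[y + i][x + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


def relu(feature_map):
    """Zero out negative responses, as CNN layers typically do after convolving."""
    return [[max(0, v) for v in row] for row in feature_map]


# 4x5 grayscale patch: dark (0) on the left, bright (9) on the right.
patch = [[0, 0, 0, 9, 9] for _ in range(4)]
feature_map = relu(conv2d(patch, EDGE_KERNEL))
print(feature_map)  # [[0, 36, 36], [0, 36, 36]]
```

The resulting feature map is zero over the flat dark region and positive where the window overlaps the edge. A deeper layer would take maps like this one as input and combine them into detectors for larger structures.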

How AI learns visual styles

AI learns style through exposure to large datasets. During training, the model processes millions or billions of images and adjusts its internal parameters to reduce prediction errors. Over time, it learns statistical regularities associated with specific visual approaches.

For example, if many images share:

- Muted earth tones

- Soft lighting

- Visible brush textures

the model begins to associate these features with a coherent style. Importantly, styles are not stored as fixed templates. Instead, they are encoded implicitly in the model's learned parameters, effectively as probability distributions over visual features.

When a user requests a specific style, the model draws from these learned distributions to guide image generation. The result is not a copy of a single artwork but a new image that statistically aligns with the learned characteristics.
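A drastically simplified illustration of style as a probability distribution: summarize each example image by two hypothetical features (warmth and brightness), fit a mean and standard deviation per feature, then sample new feature vectors. Treating the features as independent Gaussians is an assumption made for brevity; real generative models learn far richer, implicit distributions over entire images.

```python
import random
import statistics

# Hypothetical per-image features for a small "muted earth tones" set:
# (warmth, brightness), each on a 0-1 scale.
examples = [
    (0.72, 0.41), (0.68, 0.38), (0.75, 0.45),
    (0.70, 0.36), (0.66, 0.43), (0.73, 0.40),
]


def fit_style(samples):
    """Fit an independent Gaussian (mean, sample stdev) to each feature."""
    columns = zip(*samples)
    return [(statistics.mean(col), statistics.stdev(col)) for col in columns]


def sample_style(params, rng):
    """Draw one new feature vector from the fitted distribution."""
    return tuple(rng.gauss(mu, sigma) for mu, sigma in params)


params = fit_style(examples)
rng = random.Random(0)  # fixed seed so the run is reproducible
new_images = [sample_style(params, rng) for _ in range(3)]
for features in new_images:
    print([round(f, 3) for f in features])
```

Each sampled vector is new rather than a copy of any example, yet it is drawn from the same statistics as the set, which is the behaviour described above.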

Texture as a spatial pattern problem

Texture is particularly well-suited to AI learning because it often involves repetition and local consistency. Neural networks excel at identifying patterns that recur across small regions of an image.

Textures are captured through:

- Spatial frequency (how often details repeat)

- Directionality (aligned fibers, brushstrokes, or grain)

- Variability (natural imperfections versus uniform surfaces)

By analyzing how pixel values change relative to their neighbors, AI builds a sense of surface quality. This is why AI-generated images can convincingly depict materials like stone, skin, fabric, or metal, even though the model has no physical understanding of those materials.
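Each of the three texture properties listed above can be measured with a few lines of code. This sketch assumes a grayscale patch stored as a list of rows. The function names and the specific measures (an autocorrelation peak for spatial frequency, the share of horizontal versus vertical change for directionality, the standard deviation for variability) are illustrative choices, not the method any particular model uses.

```python
import statistics


def dominant_period(row, max_lag):
    """Spatial frequency: the shift (in pixels) at which the row best matches
    itself, found by maximizing the autocorrelation of the mean-centred values."""
    mean = statistics.mean(row)
    centred = [v - mean for v in row]

    def score(lag):
        return sum(centred[i] * centred[i + lag] for i in range(len(row) - lag))

    return max(range(1, max_lag + 1), key=score)


def directionality(patch):
    """Share of brightness change that runs left-right (1.0 = vertical stripes,
    0.0 = horizontal stripes, 0.5 = no preferred direction)."""
    gx = sum(abs(r[x + 1] - r[x]) for r in patch for x in range(len(r) - 1))
    gy = sum(abs(patch[y + 1][x] - patch[y][x])
             for y in range(len(patch) - 1) for x in range(len(patch[0])))
    return gx / (gx + gy) if gx + gy else 0.5


def variability(patch):
    """Population standard deviation of brightness (0.0 = perfectly uniform)."""
    return statistics.pstdev([v for row in patch for v in row])


# Hypothetical 4x12 patch of vertical stripes: two dark, then two bright columns.
stripes = [[0, 0, 9, 9] * 3 for _ in range(4)]
uniform = [[5] * 12 for _ in range(4)]

print(dominant_period(stripes[0], max_lag=6))      # 4
print(directionality(stripes))                     # 1.0
print(variability(stripes), variability(uniform))  # 4.5 0.0
```

All three measures look only at how values relate to their neighbours, which is why they say nothing about what material the patch depicts.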

Feature maps and internal representations

Within a neural network, images are transformed into feature maps. These are intermediate representations that highlight specific visual properties. Some feature maps respond strongly to sharp edges, others to color gradients, and others to repetitive textures.

Style tends to be captured by statistics pooled across the whole image, such as how strongly different feature maps activate together, which reflects global consistency regardless of where objects sit. Texture-related feature maps respond to smaller, localized regions, capturing fine detail.

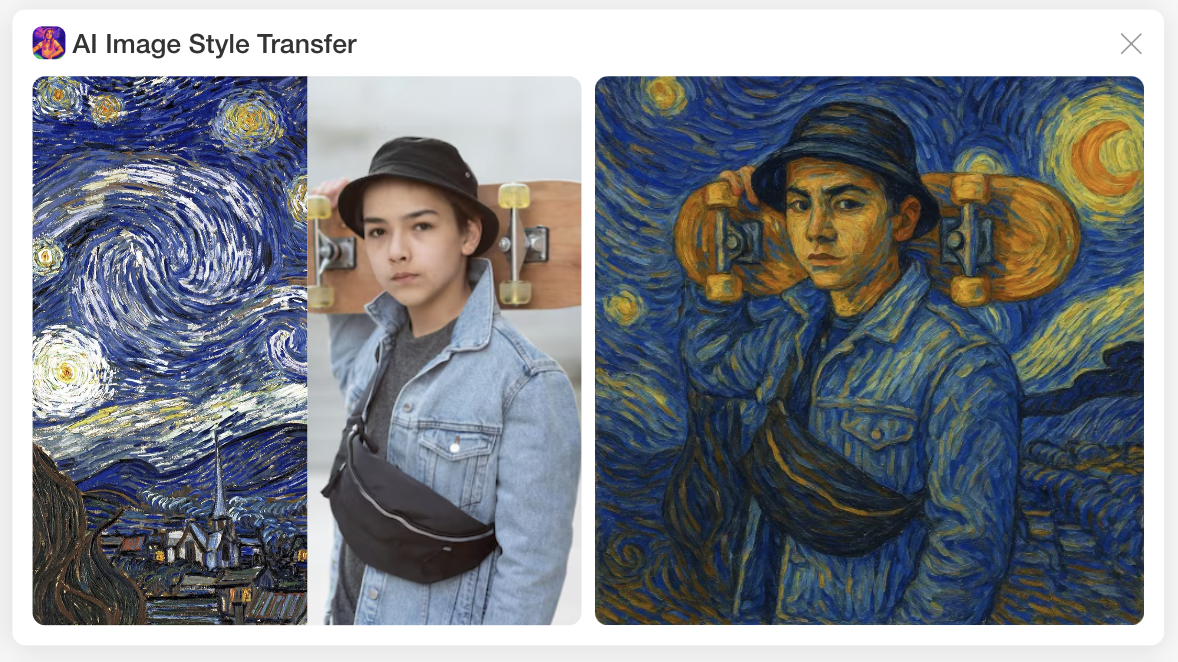

This separation allows AI systems to manipulate style and texture independently to some degree. It is also the foundation for techniques such as style transfer, where the content of one image is combined with the style of another.
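In neural style transfer as introduced by Gatys et al., style is commonly summarized with a Gram matrix: the products of every pair of feature maps, summed over all positions. The sketch below uses tiny hand-written feature maps as a stand-in for real network activations; the normalization (dividing by the number of positions) is one choice among several used in practice, and the function names are hypothetical.

```python
def gram_matrix(feature_maps):
    """Style summary: average product of every pair of feature maps.

    feature_maps is a list of C maps, each flattened to N activations.
    Summing over positions throws away where features occur and keeps only
    how strongly they co-occur.
    """
    n = len(feature_maps[0])
    return [[sum(a[k] * b[k] for k in range(n)) / n for b in feature_maps]
            for a in feature_maps]


def style_distance(gram_a, gram_b):
    """Mean squared difference between two Gram matrices (a simple style loss)."""
    c = len(gram_a)
    return sum((gram_a[i][j] - gram_b[i][j]) ** 2
               for i in range(c) for j in range(c)) / (c * c)


# Two hypothetical feature maps (C=2, N=4), the same maps with their
# positions reversed, and a third, differently textured set.
original = [[1, 0, 2, 0], [0, 1, 0, 3]]
rearranged = [row[::-1] for row in original]
different = [[1, 1, 1, 1], [1, 1, 1, 1]]

print(gram_matrix(original))  # [[1.25, 0.0], [0.0, 2.5]]
print(style_distance(gram_matrix(original), gram_matrix(rearranged)))  # 0.0
print(style_distance(gram_matrix(original), gram_matrix(different)))   # 1.078125
```

Rearranging positions leaves the Gram matrix unchanged, so both versions count as the same style. This insensitivity to layout is what lets style transfer keep one image's content while borrowing another's style.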

Diffusion models and progressive refinement

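Many contemporary AI art tools rely on diffusion models. These systems generate images through a gradual refinement process: they start from random noise and remove it step by step until the result matches learned visual patterns. Many, including Stable Diffusion, run this process in a compressed latent space and decode the result into pixels only at the end.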

During this process:

- Early steps establish general composition and color balance

- Middle steps define objects and stylistic coherence

- Later steps refine textures and fine details

This progression loosely parallels how style and texture are represented internally: style emerges as a guiding constraint early on, while texture is sharpened as the image becomes more detailed.
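The refinement loop can be sketched as a deterministic DDIM-style sampler on a toy 1D signal. Everything here is an assumption for illustration: the 8-value target, the cosine-shaped noise schedule, and above all `predict_clean`, a hypothetical oracle that returns the answer a trained network would have to estimate. The sketch therefore shows the mechanics of stepping from noise to signal, not how a real model settles composition before texture.

```python
import math
import random

# Toy 1D "image": a smooth ramp (overall composition) plus a small
# alternating ripple (fine texture).
TARGET = [x / 7 + 0.05 * (-1) ** x for x in range(8)]

# Assumed cosine-shaped schedule: ALPHA_BAR[t] is the share of signal left at
# step t, from 1.0 (clean, t=0) down to roughly 0.0 (pure noise, t=STEPS).
STEPS = 10
ALPHA_BAR = [math.cos(t / STEPS * math.pi / 2) ** 2 for t in range(STEPS + 1)]


def predict_clean(x_t, t):
    """Hypothetical oracle standing in for the trained network, which would
    have to estimate the clean image from x_t (and the prompt)."""
    return TARGET


def ddim_sample(seed=0):
    """Deterministic DDIM-style sampling (eta = 0) starting from pure noise."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in TARGET]
    for t in range(STEPS, 0, -1):
        a_t, a_prev = ALPHA_BAR[t], ALPHA_BAR[t - 1]
        x0_hat = predict_clean(x, t)
        # Noise implied by the current sample and the clean estimate.
        eps_hat = [(xi - math.sqrt(a_t) * ci) / math.sqrt(1 - a_t)
                   for xi, ci in zip(x, x0_hat)]
        # Blend the clean estimate with that noise at the next, lower level.
        x = [math.sqrt(a_prev) * ci + math.sqrt(1 - a_prev) * ei
             for ci, ei in zip(x0_hat, eps_hat)]
    return x


result = ddim_sample()
print(max(abs(r - c) for r, c in zip(result, TARGET)))  # 0.0 with the oracle
```

With a real network in place of the oracle, the estimate at high noise levels can only recover large-scale structure reliably, and finer detail becomes recoverable as the noise weight shrinks.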

Why AI styles feel consistent but not intentional

AI-generated styles often feel coherent, yet they lack intention. This is because consistency arises from statistical averaging rather than conscious choice. The model optimizes for patterns that are most likely given the input prompt and its training data.

As a result:

- Styles may blend subtly without clear boundaries

- Textures may look convincing but slightly exaggerated

- Certain visual clichés may appear frequently

These traits reflect the data-driven nature of AI learning. The model reproduces what is common and statistically reinforced, not what is conceptually meaningful.

Limitations in understanding texture and style

Despite impressive results, AI has clear limitations. It does not understand texture as a physical property or style as cultural expression. It cannot reason about why a texture feels appropriate or why a style conveys a specific mood.

Common limitations include:

- Over-smoothing of textures at high resolutions

- Repetition artifacts in complex surfaces

- Inconsistent stylistic details across large compositions

These issues arise when statistical patterns conflict or when the model lacks sufficient training examples for a specific visual context.
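One mechanism behind over-smoothing can be shown with plain arithmetic. When a model trained with a squared-error loss cannot tell which of several plausible textures belongs in a region, the single prediction that minimizes its expected loss is their average, which is smoother than any of them. The two 1D textures below are hypothetical, and diffusion models are partly designed to avoid this by sampling rather than predicting one average, so treat this as a simplified illustration of the general tendency.

```python
# Two equally plausible, hypothetical 1D textures for the same region.
texture_a = [0.0, 1.0, 0.0, 1.0]
texture_b = [1.0, 0.0, 1.0, 0.0]
candidates = [texture_a, texture_b]


def mse(pred, target):
    """Mean squared error between two equal-length lists."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def expected_loss(pred):
    """Average squared error when either texture is equally likely."""
    return sum(mse(pred, c) for c in candidates) / len(candidates)


average = [(a + b) / 2 for a, b in zip(texture_a, texture_b)]
for name, pred in [("texture_a", texture_a), ("average", average)]:
    print(name, pred, expected_loss(pred))
# texture_a [0.0, 1.0, 0.0, 1.0] 0.5
# average [0.5, 0.5, 0.5, 0.5] 0.25
```

The flat average scores better than either real texture, even though it contains no texture at all.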

How prompts influence stylistic interpretation

User prompts act as constraints that steer the model’s internal representations. Descriptive language nudges the AI toward certain regions of its learned style and texture space.

Effective prompts often include:

- References to materials or surfaces

- Descriptors of lighting and atmosphere

- Stylistic adjectives related to mood or era

The model translates these words into shifts in probability rather than following them as instructions. Understanding this helps explain why prompt wording can dramatically affect output quality.
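In many text-to-image diffusion systems, including Stable Diffusion, the prompt steers generation through classifier-free guidance: at each denoising step the network predicts the noise twice, once with the prompt and once without it, and the two predictions are combined. The vectors below are made-up stand-ins for those predictions, and the scale of 7.5 is an illustrative assumption.

```python
def guided_noise(eps_uncond, eps_cond, guidance_scale):
    """Classifier-free guidance: start from the prediction made without the
    prompt and move along the direction the prompt adds, scaled."""
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Made-up noise predictions for a three-value latent.
eps_uncond = [0.25, -0.5, 0.0]  # network run with an empty prompt
eps_cond = [0.5, -0.5, 0.25]    # network run with the user's prompt

print(guided_noise(eps_uncond, eps_cond, 1.0))  # [0.5, -0.5, 0.25]
print(guided_noise(eps_uncond, eps_cond, 7.5))  # [2.125, -0.5, 1.875]
```

A scale of 1 returns the prompt-conditioned prediction unchanged; larger values push further in the direction the prompt adds. The prompt never runs as a command; it only shifts what the model predicts at each step.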

Style and texture as learned abstractions

Ultimately, AI understands styles and textures as abstractions derived from data. These abstractions are mathematical, not perceptual. They allow the system to generate images that align with human expectations, even though the underlying process is entirely non-human.

This gap between appearance and understanding is central to AI art. The images may look expressive and tactile, yet their creation is rooted in pattern recognition rather than experience or intent.

Where this understanding is heading

As models grow more sophisticated, representations of style and texture are becoming more nuanced. Future systems are likely to handle:

- Finer material distinctions

- More stable long-range stylistic consistency

- Improved control over texture density and variation

These improvements will not come from AI gaining awareness, but from better architectures, richer datasets, and more refined training methods.

Understanding how AI processes styles and textures allows creators and viewers alike to engage with AI-generated art more critically. It reveals why certain results feel compelling, why others feel artificial, and how much of visual creativity can be modeled as structured data by a process that never becomes human.