How neural networks generate images step by step

Neural networks have transformed image creation from a manual, human-driven craft into a computational process capable of producing realistic, artistic, or entirely novel visuals. From AI-generated paintings to photorealistic illustrations created from text, these systems rely on layered mathematical models trained on vast collections of images. While the results can appear almost magical, the underlying process follows a clear and logical sequence.

This article explains how neural networks generate images step by step, starting with basic concepts and gradually moving toward the advanced mechanisms used by modern AI art systems.

What it means for a neural network to “generate” an image

An image is not understood by a computer as a picture but as numerical data. Each pixel is represented by values describing color and brightness, typically using red, green, and blue channels. Image generation means predicting a structured grid of numbers that, when rendered, form a coherent visual.

Neural networks learn patterns that connect these numbers. Instead of memorizing images, they learn relationships such as edges, textures, shapes, lighting, perspective, and semantic meaning. Image generation occurs when the network uses this learned structure to produce new pixel values that resemble real or artistic images without directly copying any single example.

The training data: learning visual language

Every image-generating neural network begins with training data. This data usually consists of millions or billions of images, often paired with descriptions, labels, or metadata.

During training, the network is exposed to repeated examples of:

- Objects and their shapes

- Colors and shading patterns

- Styles such as photography, painting, or illustration

- Spatial relationships between elements

By processing this data many times, the network learns a visual language. Just as humans learn to recognize patterns in faces or landscapes, the model learns which pixel combinations tend to occur together and which do not.

Step 1: Converting images into numerical representations

Before learning can begin, images must be converted into numbers. Each image is transformed into a grid of pixel values, often normalized so that values fall within a consistent numerical range.

At this stage, the neural network does not see objects like “tree” or “face.” It only sees matrices of numbers. Understanding emerges later through layered processing.

This numerical representation allows the network to perform mathematical operations that gradually extract meaning from raw visual data.
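To make this concrete, here is a minimal sketch of an image as a grid of numbers and of two common normalization ranges. The 2x2 image and the variable names are made up for illustration, and the choice between the [0, 1] and [-1, 1] ranges varies from one model to another.

```python
import numpy as np

# A hypothetical 2x2 RGB image: shape (height, width, channels), values 0-255.
image = np.array(
    [[[255, 0, 0], [0, 255, 0]],
     [[0, 0, 255], [255, 255, 255]]],
    dtype=np.uint8,
)

# Scale the integer pixel values to floats in [0, 1].
unit = image.astype(np.float32) / 255.0

# Some models expect a range centered on zero instead: [-1, 1].
centered = unit * 2.0 - 1.0

print(image.shape)                     # (2, 2, 3)
print(centered.min(), centered.max())  # -1.0 1.0
```

Nothing in these arrays says "red pixel" or "white pixel". The network only ever receives the numbers.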

Step 2: Feature extraction through layered processing

Neural networks consist of multiple layers, each designed to detect increasingly complex features.

Early layers typically identify simple patterns such as:

- Edges and contours

- Color gradients

- Basic textures

As information moves deeper into the network, later layers combine these simple features into more abstract concepts:

- Shapes and parts of objects

- Object boundaries

- Style characteristics

This layered structure loosely resembles human visual perception, in which simple signals are thought to be processed first and complex interpretations later. By the final layers, the network can internally represent high-level concepts like “portrait,” “sunset,” or “sketch style,” even though these are still encoded numerically.
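The kind of edge detection attributed to early layers can be sketched with a single hand-written filter. This is only an illustration: the Sobel kernel below is fixed by hand, whereas a trained network learns its own filter values, and `filter2d` is a helper written for this example rather than a library function.

```python
import numpy as np

def filter2d(image, kernel):
    """Slide a small kernel over a 2D image (valid region only, no padding).

    This is cross-correlation, the operation that convolutional layers
    in deep learning libraries typically compute.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Grayscale test image: dark left half, bright right half.
image = np.zeros((5, 6))
image[:, 3:] = 1.0

# Sobel kernel that responds to left-to-right changes in brightness.
sobel_x = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)

response = filter2d(image, sobel_x)
print(response)  # each row is [0. 4. 4. 0.]: strong only where dark meets bright
```

The output is zero in the flat regions and large at the boundary, which is the sort of signal deeper layers can combine into shapes and object parts.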

Step 3: Learning probability distributions, not images

A critical idea in image generation is that neural networks are not designed to store their training images. Instead, they learn an approximation of the probability distribution those images come from.

This means the model learns how likely certain pixel arrangements are, given a context. For example, if part of an image contains sky-like colors, the model learns that clouds or gradients are more probable than sharp edges or text.

Image generation then becomes a process of sampling from these learned probabilities, ensuring that the final output follows the statistical structure of real images.
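A deliberately tiny sketch can show what "learning probabilities and then sampling" means. It assumes one-dimensional binary "images" and a model that looks only at the previous pixel, which is far simpler than any real image generator. The training rows and the `sample_row` helper are invented for this example.

```python
import numpy as np

# Hypothetical training data: 1D binary "images" (0 = dark, 1 = bright).
training_rows = np.array([
    [0, 0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1, 1],
    [0, 0, 0, 0, 1, 1],
    [0, 1, 1, 1, 1, 1],
])

# Count how often each value follows each value: counts[prev, next].
counts = np.zeros((2, 2))
for row in training_rows:
    for prev, nxt in zip(row[:-1], row[1:]):
        counts[prev, nxt] += 1

# Turn counts into conditional probabilities P(next | prev).
# Here: P(next | prev=0) = [0.6, 0.4] and P(next | prev=1) = [0.0, 1.0].
probs = counts / counts.sum(axis=1, keepdims=True)

def sample_row(length, rng):
    """Generate a new row one pixel at a time from the learned probabilities."""
    row = [0]  # assumption: every row starts dark, as in the training data
    for _ in range(length - 1):
        row.append(int(rng.choice(2, p=probs[row[-1]])))
    return row

rng = np.random.default_rng(0)
print(sample_row(6, rng))
```

The sampled row may not match any training row, yet it always follows the same rule the data followed: once the pixels turn bright, they stay bright.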

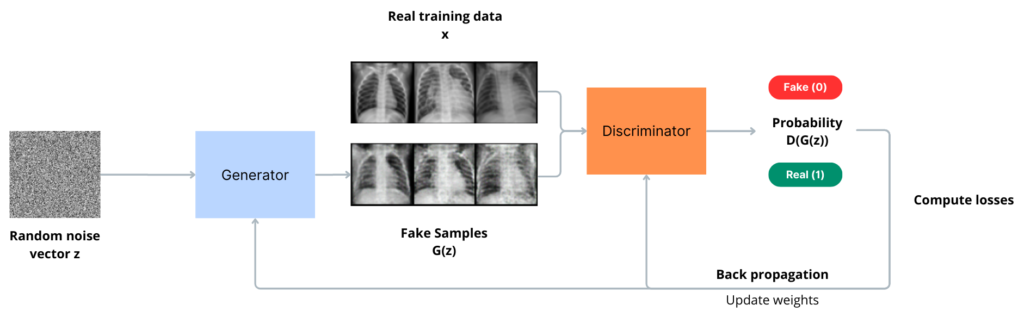

Step 4: Introducing noise or randomness

Most modern image generation systems rely on controlled randomness. This randomness ensures that generated images are not identical and allows creative variation.

Depending on the model type, randomness may be introduced by:

- Starting from random noise

- Sampling from a latent space

- Applying stochastic steps during image refinement

This randomness is not chaotic. It is carefully constrained by the learned patterns so that outputs remain coherent and visually plausible.
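One practical form of this control is the random seed. The sketch below assumes the starting noise is drawn from a seeded NumPy generator; `initial_noise` is a hypothetical helper, not part of any particular image generation tool.

```python
import numpy as np

def initial_noise(seed, shape=(4, 4)):
    """Draw Gaussian noise from a generator initialized with `seed`."""
    rng = np.random.default_rng(seed)
    return rng.standard_normal(shape)

a = initial_noise(seed=42)
b = initial_noise(seed=42)
c = initial_noise(seed=7)

print(np.array_equal(a, b))  # True: same seed, same starting noise
print(np.array_equal(a, c))  # False: different seed, different starting noise
```

This is why reusing a seed with the same settings can reproduce an image, while changing the seed gives a new variation.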

Step 5: The role of latent space

Latent space is an abstract, compressed representation of visual information. Instead of working directly with pixels, many neural networks operate in this reduced space.

In latent space:

- Similar images are located near each other

- Style and content can often be separated

- Small changes produce gradual visual differences

When generating an image, the model navigates this space to find a point that corresponds to a meaningful visual concept. That point is then decoded back into pixel values.

Latent space is what allows smooth transitions between styles, objects, and compositions, making it central to creative image generation.
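The idea of moving smoothly through latent space can be sketched with linear interpolation between two latent vectors. Everything here is a stand-in: the "decoder" is a fixed random linear map invented for this example, whereas real decoders are learned and nonlinear.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical decoder: maps a 4-dimensional latent vector to an 8x8 image.
decoder_weights = rng.standard_normal((64, 4))

def decode(z):
    """Turn a latent vector into an 8x8 grid of pixel values."""
    return (decoder_weights @ z).reshape(8, 8)

z_start = rng.standard_normal(4)  # latent point for one "image"
z_end = rng.standard_normal(4)    # latent point for another

# Walk from z_start to z_end in equal steps and decode each point.
steps = np.linspace(0.0, 1.0, 5)
frames = [decode((1 - t) * z_start + t * z_end) for t in steps]

# Average pixel change between neighboring frames.
for prev, nxt in zip(frames[:-1], frames[1:]):
    print(round(float(np.abs(nxt - prev).mean()), 4))
```

With this linear toy decoder every step changes the image by the same amount. A learned decoder is not that regular, but the principle of small latent moves producing gradual visual changes is the same.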

Step 6: Step-by-step image construction in diffusion models

One of the most influential approaches to image generation today is the diffusion process. Instead of creating an image all at once, the model builds it gradually.

The model learns to run a noising process in reverse:

- During training, noise is added to real images in small increments until only noise remains

- The model learns to predict and remove the noise added at each step

- During generation, the model starts from pure random noise

- It repeatedly denoises the image, so that more structure emerges at each step

Early steps establish broad shapes and composition. Later steps refine textures, edges, lighting, and fine details. This coarse-to-fine construction is one reason diffusion-based images often combine a coherent overall composition with fine detail.
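The loop structure can be sketched in a few lines. This is a toy under strong assumptions: the "image" is four numbers, the noise schedule is an arbitrary linear one, and the trained network is replaced by an oracle that cheats by looking at the clean image. The update rule is a deterministic, DDIM-style step chosen for simplicity, and it is not claimed to be what any specific product uses.

```python
import numpy as np

rng = np.random.default_rng(0)

# Noise schedule (assumed linear for this sketch).
num_steps = 50
betas = np.linspace(1e-4, 0.2, num_steps)
alpha_bar = np.cumprod(1.0 - betas)  # share of signal variance left at each level

x0 = np.array([1.0, -1.0, 0.5, -0.5])  # a tiny stand-in "image"

def add_noise(x0, t, noise):
    """Forward process: jump directly to noise level t."""
    return np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise

def oracle_predict_noise(x_t, t):
    """Stand-in for the trained network. It cheats by using x0; a real model
    sees only x_t and t and has to learn this prediction from data."""
    return (x_t - np.sqrt(alpha_bar[t]) * x0) / np.sqrt(1.0 - alpha_bar[t])

# Generation: start at the noisiest level and step back toward a clean image.
x = add_noise(x0, num_steps - 1, rng.standard_normal(x0.shape))
for t in range(num_steps - 1, -1, -1):
    eps = oracle_predict_noise(x, t)
    x0_estimate = (x - np.sqrt(1.0 - alpha_bar[t]) * eps) / np.sqrt(alpha_bar[t])
    prev_alpha_bar = alpha_bar[t - 1] if t > 0 else 1.0
    # Move to the previous, less noisy level using the current estimate.
    x = np.sqrt(prev_alpha_bar) * x0_estimate + np.sqrt(1.0 - prev_alpha_bar) * eps

print(np.round(x, 3))  # close to x0, because the oracle's predictions are exact
```

Because the oracle is perfect, the loop recovers the original values. A real network only approximates the noise, which is why it produces new images rather than reconstructing training examples.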

Step 7: Conditioning the generation with text or prompts

When text is involved, the system must align language with visual concepts. This is done through a shared representation between text and images.

The text prompt is converted into a numerical embedding that captures meaning, style, and intent. During image generation, this embedding influences each step, nudging the model toward visuals that match the description.

For example, words related to lighting affect brightness and contrast, while artistic styles influence color palettes and textures. The image emerges as a visual interpretation of the prompt rather than a literal translation.
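The notion of a shared representation can be illustrated with cosine similarity between embeddings. The three-dimensional vectors below are made up for this example. In a real system they would come from trained text and image encoders and have far more dimensions.

```python
import numpy as np

def cosine_similarity(a, b):
    """Similarity of direction between two vectors, from -1 to 1."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Hypothetical text embeddings in the shared space.
text_embeddings = {
    "a sunset over the sea": np.array([0.9, 0.1, 0.2]),
    "a pencil sketch of a cat": np.array([0.1, 0.9, 0.3]),
}

# Hypothetical image embedding; pretend it encodes a sunset photo.
image_embedding = np.array([0.8, 0.2, 0.1])

scores = {text: cosine_similarity(vec, image_embedding)
          for text, vec in text_embeddings.items()}
best_match = max(scores, key=scores.get)
print(best_match)  # a sunset over the sea
```

When text and images live in the same space like this, a prompt embedding gives the generator a direction to steer toward at every refinement step.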

Step 8: Refinement, consistency, and quality control

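As the image takes shape, each refinement step moves it closer to the patterns the network learned during training. In a diffusion model this is not a separate checking stage. The consistency comes from the same denoising prediction that builds the image.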

Key aspects refined during later stages include:

- Anatomical correctness

- Object boundaries

- Perspective and depth

- Visual coherence

The model balances creativity with realism by favoring arrangements that are more probable given the prompt. This pushes the final image toward both the prompt and the statistical regularities learned during training, although it does not guarantee a flawless result.

Step 9: Decoding into a final image

Once the internal representation is complete, the model decodes it into a full-resolution image. This decoding transforms compressed numerical data back into pixel values.

Post-processing steps may include:

- Color correction

- Resolution upscaling

- Noise reduction

The result is a standard image file that can be viewed, edited, or published like any other digital image.
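The last conversion back to ordinary pixels can be sketched as follows. The decoder output is invented for this example, and nearest-neighbor repetition stands in for upscaling only because it is the simplest method. Production systems often use more sophisticated or learned upscalers.

```python
import numpy as np

# Hypothetical decoder output: a 2x2 RGB image with float values in [-1, 1].
decoded = np.array(
    [[[-1.0, -1.0, -1.0], [1.0, 1.0, 1.0]],
     [[0.0, 0.0, 0.0], [1.2, -1.3, 0.5]]]  # last pixel overshoots the range
)

# Map [-1, 1] to [0, 255], clipping values that fall outside the valid range.
pixels = np.clip((decoded + 1.0) * 127.5, 0, 255).round().astype(np.uint8)

# Nearest-neighbor upscaling: repeat every pixel twice along height and width.
upscaled = pixels.repeat(2, axis=0).repeat(2, axis=1)

print(pixels.shape, upscaled.shape)  # (2, 2, 3) (4, 4, 3)
print(pixels[1, 1])                  # [255   0 191]
```

The resulting `uint8` array has the layout that common image libraries expect when writing a PNG or JPEG file.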

Why step-by-step generation matters

Generating images incrementally is not just a technical choice. It tends to provide stability and control, and it makes the process easier to inspect. Each step makes a small correction, so mistakes introduced early can be repaired in later steps before the final output.

This approach also makes it easier to guide generation, apply constraints, and balance realism with artistic expression. It is a key reason modern AI image systems are both flexible and reliable.

The creative implications of neural image generation

Understanding how neural networks generate images reveals that creativity emerges from structure rather than randomness. These systems recombine learned visual patterns in new ways, guided by probability and context.

For artists, designers, and curious observers, this process highlights an important shift. Image creation is no longer limited to direct manual input but has become a collaborative interaction between human intent and mathematical models.

Instead of replacing visual creativity, neural networks redefine it, turning image generation into a dialogue between data, computation, and imagination.